In January 2003 Jeremiah Grossman disclosed a technique to bypass the HttpOnly1 cookie restriction. He named it Cross-Site Tracing (XST), unwittingly starting a trend to attach "cross-site" to as many web-related vulns as possible.

Unfortunately, the "XS" in XST evokes similarity to XSS (Cross-Site Scripting) which often leads to a mistaken belief that XST is a method for injecting JavaScript. (Thankfully, character encoding attacks have avoided the term Cross-Site Unicode, XSU.) Although XST attacks rely on JavaScript to exploit the flaw, the underlying problem is not the injection of JavaScript. XST is a technique for accessing headers normally restricted from JavaScript.

Confused yet?

First, let's review XSS and HTML injection. These vulns occur because a web app echoes an attacker's payload within the HTTP response body -- the HTML. This enables the attacker to modify a page's DOM by injecting characters that affect the HTML's layout, such as adding spurious characters like brackets (< and >) and quotes (' and ").

Cross-site tracing relies on HTML injection to craft an exploit within the victim's browser, but this implies that an attacker already has the capability to execute JavaScript. Thus, XST isn't about injecting <script> tags into the browser. The attacker must already be able to do that.

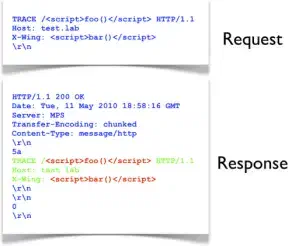

Cross-site tracing takes advantage of the fact that a web server should reflect the client's HTTP message in its respose.2 The common misunderstanding of an XST attack's goal is that it uses a TRACE request to cause the server to reflect JavaScript in the HTTP response body that the browser would then execute. In the following example, the reflection of JavaScript isn't the real vuln -- the server is acting according to spec. The green and red text indicates the response body. The request was made with netcat.

The reflection of <script> tags is immaterial (the RFC even says the server should reflect the request without modification). The real outcome of an XST attack is that it exposes HTTP headers normally inaccessible to JavaScript.

To reiterate: XST attacks use the TRACE (or synonymous TRACK) method to read HTTP headers that are otherwise blocked from JavaScript access.

For example, the HttpOnly attribute of a cookie prevents JavaScript from reading that cookie's properties. The Authentication header, which for HTTP Basic Auth is simply the Base64-encoded username and password, is not part of the DOM and not directly readable by JavaScript.

No cookie values or auth headers showed up when we made the example request via netcat because we didn't include any. Netcat doesn't have the internal state or default headers that a browser does. For comparison, take a look at the server's response when a browser's XHR object makes a TRACE request. This is the snippet of JavaScript:

var xhr = new XMLHttpRequest();

xhr.open('TRACE', 'https://test.lab/', false);

xhr.send(null);

if(200 == xhr.status)

alert(xhr.responseText);

The following image shows one possible response. (In this scenario, we've imagined a site for which the browser has some prior context, including cookies and a login with HTTP Basic Auth.) Notice the text in red. The browser included the Authorization and Cookie headers to the XHR request, which have been reflected by the server:

{: class="img-center" width="300px" height="218px" }

{: class="img-center" width="300px" height="218px" }

Now we see that both an HTTP Basic Authentication header and a cookie value appear in the response text. A simple JavaScript regex could extract these values, bypassing the normal restrictions imposed on script access to headers and protected cookies. The drawback for attackers is that modern browsers (such as the ones that have moved into this decade) are savvy enough to block TRACE requests through the XMLHttpRequest object, which leaves the attacker to look for alternate vectors like Flash plug-ins (which are also now gone from modern browsers).

This is the real vuln associated with cross-site tracing -- peeking at header values. The exploit would be impossible without the ability to inject JavaScript in the first place3. Therefore, its real impact (or threat, depending on how you define these terms) is exposing sensitive header data. Hence, alternate names for XST could be TRACE disclosure, TRACE header reflection, TRACE method injection (TMI), or TRACE header & cookie (THC) attack.

We'll see if any of those actually catch on for the next OWASP Top 10 list.

HttpOnly was introduced by Microsoft in Internet Explorer 6 Service Pack 1, which they released September 9, 2002. It was created to mitigate, not block, XSS exploits that explicitly attacked cookie values. It wasn't a method for preventing html injection (aka cross-site scripting or XSS) vulns from occurring in the first place. Mozilla magnanimously adopted in it FireFox 2.0.0.5 four and a half years later. ↩︎

Section 9.8 of the HTTP/1.1 RFC. ↩︎

Security always has nuance. A request like

TRACE /<script>alert(42)</script> HTTP/1.0might be logged. If a log parsing tool renders requests like this to a web page without encoding it correctly, then HTML injection once again becomes possible. This is often referred to as second order XSS -- when a payload is injected via one application, stored, then rendered by a different one. ↩︎