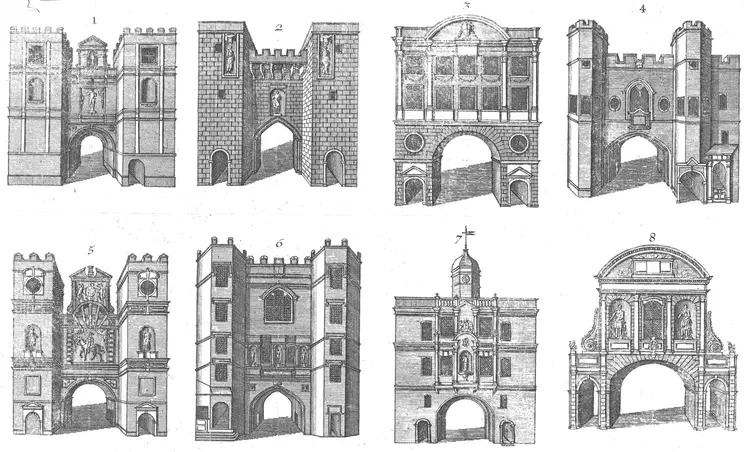

Courtesy British Library (Maps K.Top.27.25)

We covered Cloudflare’s EmDash project as an example of the kind of appsec future I’d like to see. EmDash is the “spiritual successor to WordPress” that has one very specific design choice that caught my eye – sandboxing plugins.

You can’t look at a WordPress plugin without tripping over an XSS, SQL injection, or RCE. Their vulns are ubiquitous and boring. I think we’ve only ever covered maybe two of them on the podcast. WordPress core is relatively secure, but that feels like faint praise when the core can’t protect itself from a plague of plugins with poor security.

Unlike WordPress, where plugins essentially execute unconstrained within the boundaries of the core, EmDash plugins must explicitly state the capabilities they need and are restricted to those capabilities. The article shows an example of a plugin that sends an email. That example highlights several positive security benefits:

- Plugin capabilities are static and inspectable at install time. They can’t dynamically modify themselves or mutate unexpectedly.

- Capabilities not declared are denied. It feels trite to say “default deny is good” in 2026, but it’s effective and should be the expectation for any component that must have a security boundary.

- Capabilities are simple, expressive, and granular. There are eleven right now, with human-friendly names and the contexts they grant access to.
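To make the default-deny idea concrete, here is a minimal sketch of how a host could enforce a static capability list. It is not EmDash's actual API: the `PluginManifest` shape, the `createGuard` helper, the plugin id, and the `email:send` capability name are all assumptions made up for illustration.

```typescript
// Hypothetical types for illustration only; not EmDash's real plugin API.
type Capability = string;

interface PluginManifest {
  readonly id: string;
  readonly capabilities: readonly Capability[];
}

// Build a guard from the manifest as it exists at install time.
function createGuard(manifest: PluginManifest) {
  // Copy the declared list into a private set, so later changes to the
  // manifest object cannot widen what the plugin is allowed to do.
  const granted = new Set<Capability>(manifest.capabilities);

  return {
    // True only for capabilities that were explicitly declared.
    has(capability: Capability): boolean {
      return granted.has(capability);
    },
    // Default deny: anything not declared throws.
    require(capability: Capability): void {
      if (!granted.has(capability)) {
        throw new Error(
          `Plugin "${manifest.id}" did not declare capability "${capability}"`
        );
      }
    },
  };
}

// Assumed example: a plugin that only declares the ability to send email.
const mailerManifest: PluginManifest = {
  id: "notify-on-publish",
  capabilities: ["email:send"],
};

const mailerGuard = createGuard(mailerManifest);
mailerGuard.require("email:send"); // declared, so this passes
console.log(mailerGuard.has("network:fetch")); // false, never declared
```

The point of the sketch is the shape of the check, not the names: the allowed set is fixed once, and the only way to pass `require` is to have asked for the capability up front.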

In WordPress, you have to go through a list of worries about every plugin. What does it actually do? What content, files, or tables does it touch? What network calls does it make? Answering those questions typically relies on grep and trust – the plugin’s reputation and popularity. And those questions need to be asked and answered on every point release, since a trusted author’s account might be compromised to push a malicious update.

EmDash doesn’t erase those concerns, but it definitely minimizes them. It builds far more confidence to be able to inspect a manifest for “network:fetch” or “network:fetch:any”.
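Because the capability list is static, the "every point release" review could in principle shrink to a diff. The sketch below assumes a manifest's capabilities are available as a plain array of strings; the `newlyRequested` and `reviewUpdate` helpers are hypothetical, and treating “network:fetch:any” as the one worth a closer look is my own assumption, not something EmDash prescribes.

```typescript
// Hypothetical helpers for illustration; EmDash may expose this differently.

// Capabilities present in the new version but absent from the old one.
function newlyRequested(
  previous: readonly string[],
  next: readonly string[]
): string[] {
  const before = new Set<string>(previous);
  return next.filter((capability) => !before.has(capability));
}

// Assumption: unrestricted network access deserves extra scrutiny.
const BROAD_CAPABILITIES: readonly string[] = ["network:fetch:any"];

function reviewUpdate(
  previous: readonly string[],
  next: readonly string[]
): { added: string[]; needsCloserLook: boolean } {
  const added = newlyRequested(previous, next);
  const needsCloserLook = added.some(
    (capability) => BROAD_CAPABILITIES.indexOf(capability) !== -1
  );
  return { added, needsCloserLook };
}

// Example: an update that quietly widens its network access.
const review = reviewUpdate(
  ["network:fetch"],
  ["network:fetch", "network:fetch:any"]
);
console.log(review.added, review.needsCloserLook);
```

An update that adds nothing to the list still needs the usual trust, but one that suddenly asks for broader network access is exactly the kind of change grep tends to miss.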

(As an aside, Cloudflare also explains how their Workers, built on top of V8 isolates, are designed for performance and security. That security design also makes them “resistant to the entire range of Spectre-style attacks”, which is another wonderful example of avoiding a vuln class altogether. What I appreciate most about that write-up is how it presents a threat model, then discusses the high-level architecture and processes used to address those threats.)

Of course, there’s some self-interest in Cloudflare creating a project like this. It highlights the security architecture they’ve invested in for their platform – which, obviously, I’m a fan of (this site runs on Cloudflare Pages). EmDash also introduces first-class support for the new x402 standard for “internet-native payments.” Given that x402 originated from Coinbase, its current state translates to microtransactions with blockchains and stablecoins.

But if financial transactions are going to be part of a CMS, I’d much rather see them on a platform with the plugin security design of EmDash rather than WordPress.

Oh, and yeah, I read through the article a few times to make sure it wasn’t an April Fools’ joke based on its publication date. It’s also coincidentally authored by two Matts – Matt “T.K” Taylor and Matt Kane. They’re not as famous as the Matt behind Automattic, the company that owns WordPress.com (the commercial side, and a contributor to the open-source wordpress.org), who took a quite antagonistic turn towards the open source ethos. I very much prefer the future of CMS design that EmDash represents.

And that’s why I like EmDash as an example of the future of appsec. It doesn’t even have to be called appsec – and likely won’t. It’s secure software engineering. It’s a project that identified common security failures, evaluated solutions, and created a design that eliminated or minimized broad types of flaws.

I’d rather read this kind of architecture discussion than read about yet another XSS.

(Post adapted from the original one on LinkedIn.)